Robotics is rapidly being transformed by advances in artificial intelligence.

And the benefits are widespread: We are seeing safer vehicles with the ability to automatically brake in an emergency, robotic arms transforming factory lines that were once off-shored and new robots that can do everything from shopping for groceries to delivering prescription drugs to people who have trouble doing so themselves.

But our ever-growing appetite for intelligent, autonomous machines poses a host of ethical challenges.

Rapid advances have led to ethical dilemmas

These ideas and more were swirling as my colleagues and I met in early November at one of the world’s largest autonomous robotics-focused research conferences – the IEEE International Conference on Intelligent Robots and Systems. There, academics, corporate researchers, and government scientists presented developments in algorithms that allow robots to make their own decisions.

As with all technology, the range of future uses for our research is difficult to imagine. It’s even more challenging to forecast given how quickly this field is changing.

Take, for example, the ability of a computer to identify objects in an image: In 2010, the state of the art was successful only about half of the time, and it was stuck there for years.

Today, though, the best algorithms in published papers reach 86 percent accuracy. That advance alone allows autonomous robots to understand what they are seeing through their camera lenses. It also shows the rapid pace of progress over the past decade due to developments in AI.

This kind of improvement is a true milestone from a technical perspective. Whereas in the past manually reviewing troves of video footage would require an incredible number of hours, now such data can be rapidly and accurately parsed by a computer program.

But it also gives rise to an ethical dilemma. In removing humans from the process, the assumptions that underpin the decisions related to privacy and security have been fundamentally altered.

For example, the use of cameras in public streets may have raised privacy concerns 15 or 20 years ago, but adding accurate facial recognition technology dramatically alters those privacy implications.

Easy to modify systems

When developing machines that can make their own decisions – typically called autonomous systems – the ethical questions that arise are arguably more concerning than those in object recognition.

AI-enhanced autonomy is developing so rapidly that capabilities which were once limited to highly engineered systems are now available to anyone with a household toolbox and some computer experience.

People with no background in computer science can learn some of the most state-of-the-art artificial intelligence tools, and robots are more than willing to let you run your newly acquired machine learning techniques on them. There are online forums filled with people eager to help anyone learn how to do this.

With earlier tools, it was already easy enough to program your minimally modified drone to identify a red bag and follow it.

More recent object detection technology unlocks the ability to track a range of things that resemble more than 9,000 different object types.

Combine that with newer, more maneuverable drones, and it’s not hard to imagine how easily they could be equipped with weapons.

What’s to stop someone from strapping an explosive or another weapon to a drone equipped with this technology?

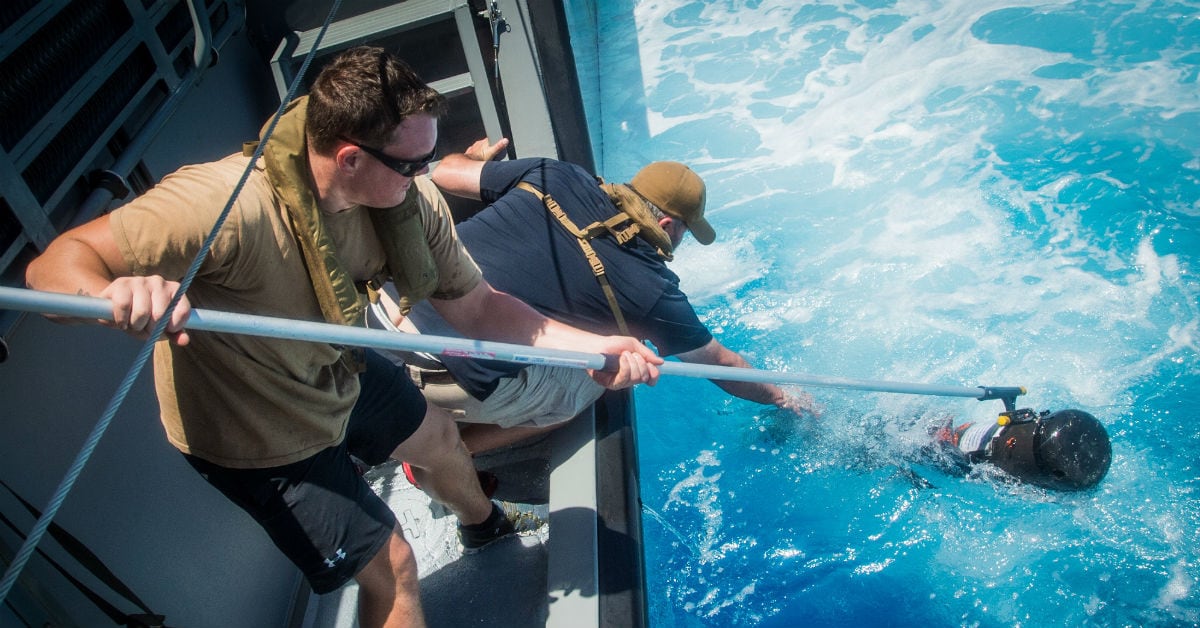

Autonomous drones, using a variety of techniques, are already a threat. They have been caught dropping explosives on U.S. troops, shutting down airports and being used in an assassination attempt on Venezuelan leader Nicolas Maduro.

The autonomous systems that are being developed right now could make staging such attacks easier and more devastating.

Regulation or review boards?

About a year ago, a group of researchers in artificial intelligence and autonomous robotics put forward a pledge to refrain from developing lethal autonomous weapons.

They defined lethal autonomous weapons as platforms that are capable of “selecting and engaging targets without human intervention.”

As a robotics researcher who isn’t interested in developing autonomous targeting techniques, I felt that the pledge missed the crux of the danger. It glossed over important ethical questions that need to be addressed, especially those at the broad intersection of drone applications that could be either benign or violent.

For one, the researchers, companies and developers who wrote the papers and built the software and devices generally aren’t doing it to create weapons. However, they might inadvertently enable others, with minimal expertise, to create such weapons.

What can we do to address this risk?

Regulation is one option, and it is already in use: aerial drones are banned near airports and around national parks.

Those bans are helpful, but they don’t prevent the creation of weaponized drones.

Traditional weapons regulations are not a sufficient template, either. They generally tighten controls on the source material or the manufacturing process.

That would be nearly impossible with autonomous systems, where the source materials are widely shared computer code and the manufacturing process can take place at home using off-the-shelf components.

Another option would be to follow in the footsteps of biologists.

In 1975, they held a conference on the potential hazards of recombinant DNA at Asilomar in California. There, experts agreed to voluntary guidelines that would direct the course of future work.

For autonomous systems, such an outcome seems unlikely at this point. Many research projects that could be used in the development of weapons also have peaceful and incredibly useful outcomes.

A third choice would be to establish self-governance bodies at the organization level, such as the institutional review boards that currently oversee studies on human subjects at companies, universities and government labs.

These boards consider the benefits to the populations involved in the research and craft ways to mitigate potential harms. But they can regulate only research done within their institutions, which limits their scope.

Still, a large number of researchers would fall under these boards’ purview – within the autonomous robotics research community, nearly every presenter at technical conferences is a member of an institution. Research review boards would be a first step toward self-regulation and could flag projects that could be weaponized.

Living with the peril and promise

Many of my colleagues and I are excited to develop the next generation of autonomous systems. I feel that the potential for good is too promising to ignore. But I am also concerned about the risks that new technologies pose, especially if they are exploited by malicious people.

Yet with some careful organization and informed conversations today, I believe we can work toward achieving those benefits while limiting the potential for harm.

Dr. Chris Heckman is an assistant professor in the Department of Computer Science at the University of Colorado at Boulder and the Jacques I. Pankove Faculty Fellow in the College of Engineering & Applied Science. His research focuses on autonomy, perception, field robotics, machine learning and artificial intelligence. He directs the Autonomous Robotics and Perception Group, a dynamic and close-knit research team aiming to develop practical and explainable techniques in probabilistic artificial intelligence. The author’s views do not necessarily reflect those of Navy Times or its staffers.